Artificial intelligence and natural hazard prevention

Artificial intelligence represents a profound revolution for Sapiens, on a par with the discovery of agriculture 8,500 years ago. But what is artificial intelligence? How does it work? Why is it such a revolution? How does it fit into the evolution of humanity? Why do artificial intelligence (AI) algorithms – just like our brains – work so well? Is AI creative? And how do certain forms of consciousness appear in AI? What are Large Language Models (LLMs) and Foundation Models? These are some of the questions we will answer in this article, while also discussing a rapidly developing field of application: natural hazards, particularly those caused by gravity (landslides, mudslides, rockfalls, avalanches).

1. What is artificial intelligence?

1.1. Neural networks

1.2. Learning and data centres

But our brain would be nothing without education and learning, which have enabled it to accumulate experience, structure it and respond appropriately to the questions it encounters – at least within the scope of its experience (“I have learnt English, but I would not be able to understand a document written in German”).

But how does the network learn? Essentially through weights associated with the links connecting the nodes. The more important a link is in terms of developing the response, the greater the weight assigned to that link.

How are these weights determined? Babies, children and adolescents learn from situations provided by experience or study, as well as through trial and error in finding the right response to these situations (“to solve a puzzle, I learned that I had to make sure the pieces of the puzzle fit together perfectly and that I could help myself by looking at the picture on the front”). In the same way, the neural network learns from a set of data provided to it together with the associated known answers. This set of inputs and outputs constitutes big data, stored in data centres. The larger the database, the more relevant what the AI has learned: hence the race towards increasingly gigantic data centres to collect the hundreds of billions of data points necessary for the “generative” (creative, predictive) relevance of AI. It should be noted that these data centres consume an excessive amount of electrical energy and generate an equally excessive amount of heat, but they are essential for the major fields of application of AI.

1.3. How are the weights attached to the links determined?

Essentially through numerical methods (i.e. composed of algorithms, an algorithm being a sequence of instructions written in such a way as to be understandable by a computer) known as gradient “backpropagation” methods. The gradient of a function is a generalised derivative which, in geometry, represents the slope of the curve representing the function. Knowing the inputs and outputs for a large number of cases, we start from the error observed at the output and work backwards through the network, adjusting the weights of the links so that the calculated outputs move closer to the known ones. When, for all known “input + output” cases from the database, the numerical analyst considers that the calculated accuracy is sufficient (by comparing the recalculated data with the data provided), they consider that the code has learned: this is learning. Then, new data can be entered into the AI code, and the output provided by the code will be remarkable (generally much better than what the Sapiens brain would have formulated), provided that the database was sufficiently large and accurate (unbiased) and that the number of nodes was sufficiently sized. Both the network and the learning must therefore be relevant.
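The gradient-based weight fitting described above can be made concrete with a minimal sketch. It assumes a deliberately tiny network (one input, two hidden nodes, one output) and an invented training set in which the known answer is the square of the input; the starting weights, the learning rate and all function names are illustrative choices, not values taken from any real AI code.

```python
import math


def forward(params, x):
    """Return the hidden activations and the network output for a scalar input."""
    w, b, v, c = params
    hidden = [math.tanh(w[j] * x + b[j]) for j in range(len(w))]
    output = sum(v[j] * hidden[j] for j in range(len(w))) + c
    return hidden, output


def mean_squared_error(params, data):
    """Average squared gap between the network outputs and the known answers."""
    return sum((forward(params, x)[1] - y) ** 2 for x, y in data) / len(data)


def backprop_step(params, data, learning_rate):
    """One full-batch gradient step; the error is propagated from output to input."""
    w, b, v, c = params
    n_hidden = len(w)
    grad_w = [0.0] * n_hidden
    grad_b = [0.0] * n_hidden
    grad_v = [0.0] * n_hidden
    grad_c = 0.0
    for x, y in data:
        hidden, output = forward(params, x)
        d_output = 2.0 * (output - y) / len(data)  # d(loss)/d(output)
        grad_c += d_output
        for j in range(n_hidden):
            grad_v[j] += d_output * hidden[j]
            # Chain rule back through tanh: d tanh(z)/dz = 1 - tanh(z)^2
            d_pre = d_output * v[j] * (1.0 - hidden[j] ** 2)
            grad_w[j] += d_pre * x
            grad_b[j] += d_pre
    return (
        [w[j] - learning_rate * grad_w[j] for j in range(n_hidden)],
        [b[j] - learning_rate * grad_b[j] for j in range(n_hidden)],
        [v[j] - learning_rate * grad_v[j] for j in range(n_hidden)],
        c - learning_rate * grad_c,
    )


def train(data, steps=3000, learning_rate=0.05):
    """Train from fixed, arbitrary weights; return (initial_loss, final_loss, params)."""
    params = ([0.8, -0.6], [0.2, 0.1], [0.5, -0.4], 0.0)
    initial_loss = mean_squared_error(params, data)
    for _ in range(steps):
        params = backprop_step(params, data, learning_rate)
    return initial_loss, mean_squared_error(params, data), params


# Hypothetical training set: inputs x with known answers y = x^2.
DATA = [(x / 4.0, (x / 4.0) ** 2) for x in range(-4, 5)]

if __name__ == "__main__":
    before, after, _ = train(DATA)
    print(f"loss before training: {before:.4f}, after: {after:.6f}")
```

The same principle applies to networks with billions of links: only the bookkeeping changes, since the chain rule is applied layer by layer from the output back to the input.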

2. How does AI work?

In the early years of AI development (until the 1990s), few nodes (about ten) and few links were implemented, and AI techniques proved disappointing: they were just one interpolation method among many. Data is entered, and if a question is asked in the middle of the data, a good answer is obtained. Nothing very new in the mathematical field of interpolation methods.

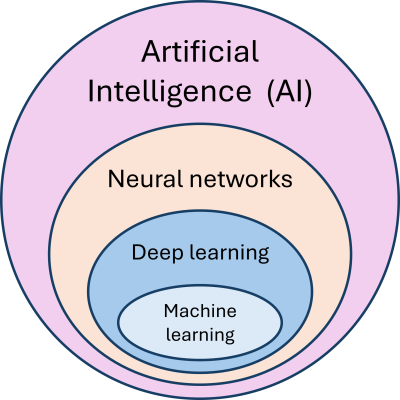

2.1. Deep learning

Everything changed when we moved to several million links (this is called deep learning). Today, ChatGPT works with 1,000 billion links, apparently… Everything changed because we saw the emergence in AI of a remarkable ability of the human brain: creativity. The experiment that first demonstrated this to the international scientific community was… the game of Go. Everyone remembers that the computer beat the world chess champions simply because its memory capacity was far superior to that of the human brain. The game of Go is of a different nature, as it requires the definition of a strategy. It is no longer a simple question of memory, because the number of possible configurations in the game of Go is at least of the order of magnitude of the number of atoms in our universe (10^80, or 1 followed by 80 zeros). The AlphaGo code, developed by DeepMind (Google), had been taught most known game situations and had played hundreds of thousands of games against itself. When AlphaGo was pitted against the world Go champion, to everyone’s surprise, it became apparent that this code was developing its own strategies that had never been taught to it and were unknown to Sapiens. This is the success of deep learning, i.e. the transition to a very large number of nodes. Today, generative AI demonstrates every day that it can create texts, poems, paintings, music, etc., prove mathematical theorems, and so on.

2.2. Machine learning

Let us remember that AI is based on the topological definition of a network, whose links are weighted, defined by learning from a database consisting of a set of inputs and outputs, as large as possible (from a thousand cases to several million) and protected from bias as much as possible. However, the method does not reveal “how” the answer was obtained: it is a “black box” by design – just like our brain (Figure 3).

3. Why does AI work so well?

This question arises for AI in the same terms as it does for our brain. There is clearly a profound unknown here, which our most brilliant mathematicians are working on. Two avenues are often mentioned.

3.1. The multi-scale geometry of the neural network

Nature as we perceive it around us is in fact always multi-scale. Take the example of sand (Figure 4). A grain of sand is generally composed of silica, a polycrystalline assembly on a nanoscopic scale. The grain of sand itself represents the microscopic scale. A dozen grains of sand are organised in chains or cycles of grains on the mesoscopic scale. Then a billion of these grains can form a pile of sand on the macroscopic scale, and finally a sandy beach represents the megascopic scale. A fundamental property of this multi-scale structure is the fact that the characteristics of the pile of sand are completely uncorrelated with those of the grain. This is one of the most fundamental properties of Nature: the emergence of new characteristics through changes in scale.

3.2. The properties of invariance described by the network

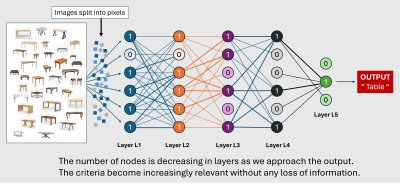

A second characteristic of neural networks is their ability to describe invariance, i.e. properties of a system that do not change in the representations/descriptions of that system.

Let us take the example of a table (Figure 5). We present thousands of tables to the network and then, if we show it a new table, it will be able to recognise it, regardless of the table’s position in space. Indeed, a table is invariant under translation and rotation (i.e. when the table is moved in space). The network, perhaps through negligible weights associated with certain links (which could be removed), manages to take this invariance into account and recognise a table regardless of its position in space. But mathematics teaches us that all invariance is linked to a group of symmetries. When we look at ourselves in a mirror, our face remains invariant, but the image we see is the symmetrical image of our real face in relation to the mirror. Indeed, the elementary particles of the quantum world are largely determined by groups of symmetries. For example, the theory of “superstrings” (each particle corresponds to a vibration mode of a tiny string in a 10-dimensional space-time) respects “supersymmetries”. Thus, it seems that a neural network is capable of taking into account symmetry groups in the same way as the real world.
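To make the notion of invariance under translation and rotation tangible, here is a small sketch. It assumes a hypothetical table top reduced to its four corners and uses a hand-built descriptor, the sorted list of distances between corners. This descriptor is only an illustration of an invariant quantity; nothing here claims that a trained network computes it in this way.

```python
import math


def move(points, angle, shift):
    """Rotate 2D points by `angle` (radians) about the origin, then translate by `shift`."""
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    dx, dy = shift
    return [(cos_a * x - sin_a * y + dx, sin_a * x + cos_a * y + dy) for x, y in points]


def shape_signature(points):
    """Sorted list of all pairwise distances: unchanged by translation and rotation."""
    return sorted(
        math.dist(p, q) for i, p in enumerate(points) for q in points[i + 1:]
    )


# Hypothetical table top: corners of a 2 m x 1 m rectangle.
TABLE = [(0.0, 0.0), (2.0, 0.0), (2.0, 1.0), (0.0, 1.0)]
MOVED_TABLE = move(TABLE, angle=math.radians(37.0), shift=(5.0, -3.0))

if __name__ == "__main__":
    print("coordinates changed:", TABLE != MOVED_TABLE)
    print("original signature:", [round(d, 6) for d in shape_signature(TABLE)])
    print("moved signature:   ", [round(d, 6) for d in shape_signature(MOVED_TABLE)])
```

The coordinates of the moved table are entirely different, yet the two signatures agree up to rounding: the description has absorbed the symmetry group of displacements.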

4. A historical perspective

Another question naturally comes to mind: why does AI represent such a disruption for humanity – comparable to the discovery of fire or agriculture?

4.1. The era of analytical or numerical solutions

From these origins until the 1960s, a slow evolution, particularly through the Italian Renaissance (Figure 6B), gave rise to what is known as “linear” physics, in which phenomena are described by fairly simple equations that can be solved analytically using functions [1]. These functions describe natural phenomena with a significant degree of approximation. Very few equations describing the real world can be solved in this explicit form. Nevertheless, this simplistic scientific corpus led to a tremendous revolution for humanity: the industrial revolution of the late 19th century. Buildings and machines multiplied. Probably the most important lesson to be learned from these centuries of scientific trial and error is that Nature has a language, and that this language is constituted by mathematics, which is intelligible to us.

4.2. How can this be done without equations?

However, most of the problems we encounter cannot be formalised in a mathematical expression using a system of equations. Let’s take a simple example from hydrology. We measure rainfall across the entire Drac river basin[2] using around a hundred sensors (rain gauges) and we want to know the water level of the Drac under the “Catane” bridge at the entrance to Grenoble (Figure 8). It is impossible to imagine formulating a system of equations linking the measurements from the 100 rain gauges to the water level under the Catane bridge. On the contrary, for AI, this problem is particularly easy to solve. Let us feed a neural network with the 100 sensor input values associated with the water level measured under the Catane bridge over a 30-year period. The network will characterise the relationship linking these inputs and outputs by calculating the weights associated with its links. And today, knowing the current values of the rain gauges, AI will provide us with the height of the water under the bridge with excellent accuracy.
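The rain-gauge example can be sketched on a much reduced scale. The code below is a toy under loudly stated assumptions: the data are invented (they are not Drac measurements), there are 4 gauges instead of 100, and the hidden relationship is linear so that a single artificial neuron suffices. A real basin responds non-linearly and with time lags, which is precisely where a larger network would be needed.

```python
import random


def predict(weights, bias, rainfall):
    """Water level predicted from one set of rain-gauge readings (linear neuron)."""
    return bias + sum(w * r for w, r in zip(weights, rainfall))


def fit(samples, steps=1500, learning_rate=0.2):
    """Fit weights and bias by full-batch gradient descent on the mean squared error."""
    n_gauges = len(samples[0][0])
    weights, bias = [0.0] * n_gauges, 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0] * n_gauges, 0.0
        for rainfall, level in samples:
            error = predict(weights, bias, rainfall) - level
            grad_b += 2.0 * error / len(samples)
            for i, r in enumerate(rainfall):
                grad_w[i] += 2.0 * error * r / len(samples)
        weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b
    return weights, bias


def make_synthetic_records(n_records, rng):
    """Invented data: 4 gauges (normalised to 0..1) and a noisy linear water level."""
    hidden_weights, hidden_bias = [0.8, 0.3, 1.2, 0.5], 0.5  # made up, not measured
    records = []
    for _ in range(n_records):
        rainfall = [rng.random() for _ in hidden_weights]
        level = predict(hidden_weights, hidden_bias, rainfall) + rng.gauss(0.0, 0.02)
        records.append((rainfall, level))
    return records


rng = random.Random(0)
history = make_synthetic_records(100, rng)  # stands in for the 30-year archive
new_days = make_synthetic_records(20, rng)  # readings the model has never seen
weights, bias = fit(history)
mean_abs_error = sum(
    abs(predict(weights, bias, rain) - level) for rain, level in new_days
) / len(new_days)

if __name__ == "__main__":
    print("learned weights:", [round(w, 2) for w in weights], "bias:", round(bias, 2))
    print("mean absolute error on unseen days:", round(mean_abs_error, 3))
```

No equation linking gauges to water level was written by hand: the weights were recovered from the archive of inputs and outputs alone.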

5. Limitations of AI

The capabilities of AI are truly remarkable, but they do have their limitations in two areas, which we will discuss here.

5.1. The ability to compress data

The first relates to weakly structured problems where the associated database is presented in a “loose” manner, without it being possible to compress it. Data “compression” in the mathematical sense of the term is the operation of replacing a large amount of data with a small amount without significant loss of information. When we follow the neural network to its output, we gradually compress the data until we reach the solution. This is, for example, an operation that our brain performs when we read a text: we do not read the letters of each word in detail in order to understand a sentence. A few letters per word, deemed significant, are sufficient (and sometimes lead to errors that force us to go back and reread!). If data cannot be compressed, it cannot be learned. A recent mathematical theorem demonstrates the equivalence between compressibility (the ability to compress) and learnability (the ability to learn).

It is important to remember that data must be compressible due to its internal structure in order to serve as a basis for learning. Our brains are subject to the same limitation: we cannot learn a body of knowledge that is not organised. Our textbooks and the notes we take in class are organised into chapters, sections, paragraphs, etc. Otherwise, we retain nothing, because our brains have been unable to compress this data and therefore unable to learn it.
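The difference between structured and “loose” data can be felt with an ordinary compression tool. The sketch below uses the `zlib` module of the Python standard library as a crude, practical proxy for compressibility; it is an assumption made for illustration and is not the formal notion used in the theorem mentioned above. The byte strings are invented.

```python
import random
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (smaller means more compressible)."""
    return len(zlib.compress(data, 9)) / len(data)


# Structured data: the same hypothetical sensor message repeated many times.
STRUCTURED = b"level=1.25 m; " * 400

# Unstructured data: pseudo-random bytes of the same length (fixed seed).
_rng = random.Random(0)
UNSTRUCTURED = bytes(_rng.randrange(256) for _ in range(len(STRUCTURED)))

if __name__ == "__main__":
    print("structured ratio:  ", round(compression_ratio(STRUCTURED), 3))
    print("unstructured ratio:", round(compression_ratio(UNSTRUCTURED), 3))
```

The repeated message shrinks to a small fraction of its size, whereas the pseudo-random bytes barely shrink at all: there is no internal structure to exploit, and by the same token nothing to learn.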

5.2. Sensitivity to initial conditions

Thus, for problems where mathematical analysis shows that there is no single solution beyond a certain time scale, AI will only be able to provide a probabilistic solution.
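Sensitivity to initial conditions can be illustrated with a classic toy system, the logistic map, chosen here as an assumption for illustration (it does not come from this article). Two trajectories start from values that differ by one part in ten billion; the sketch records how far apart they drift.

```python
def logistic_orbit(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x), returning the list [x0, x1, ..., x_steps]."""
    orbit = [x0]
    for _ in range(steps):
        orbit.append(r * orbit[-1] * (1.0 - orbit[-1]))
    return orbit


def gaps(x0, perturbation, steps):
    """Absolute gap between two orbits whose starting points differ by `perturbation`."""
    first = logistic_orbit(x0, steps)
    second = logistic_orbit(x0 + perturbation, steps)
    return [abs(a - b) for a, b in zip(first, second)]


GAPS = gaps(x0=0.2, perturbation=1e-10, steps=80)

if __name__ == "__main__":
    for step in (0, 5, 20, 40, 80):
        print(f"step {step:2d}: gap = {GAPS[step]:.3e}")
    print("largest gap reached:", round(max(GAPS), 3))
```

During the first iterations the two trajectories are indistinguishable; later the gap becomes comparable to the size of the values themselves. Beyond that horizon, a single predicted value no longer makes sense, and only a statistical description of the possible outcomes remains.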

6. Towards conscious inanimate objects?

This is a question that is now being asked with great urgency: will we see conscious robots appear among the billion robots that are expected by the end of the century? To answer this question clearly, we would need to have a consensus definition of consciousness, which is not the case.

Let us say that many agree on distinguishing three levels of consciousness. The first level, known as elementary consciousness, corresponds to reportability, i.e. the ability to report. For example, I am in a lecture hall. We would say that I am conscious of the presence of the speaker and his presentation if, once I get home, I am able to describe the conference I attended. This level of consciousness is already achieved by existing robots, which are perfectly capable of summarising a conference, reporting the questions asked and even asking questions themselves (Figure 10).

The second level, which could be described as global awareness, is the one that allows us to synthesise different stimuli from various sources. Our brain effectively has such a “captain” who has an overview of the different neural networks associated with different stimuli and can prioritise one input over another. So, if, in the conference room mentioned above, I hear a loud noise outside, I will no longer listen to the speaker but will try to decipher the origin and meaning of the noise. This level of consciousness has probably already been achieved in non-academic laboratories.

Finally, the third level is that of phenomenal consciousness. This is linked to subjective consciousness, to the intimate emotional perception we have of the events and phenomena that affect us. It is likely that this level is currently out of reach, but many researchers believe that we will be interacting with fully conscious robots within the next decade. Already, robots’ faces can express a variety of subjective feelings and decipher potentially complex emotions on human faces.

Obviously, the prospects for these developments are both very appealing (Japanese children in hospital adore their robots, which will probably also be welcomed by the elderly) and terrifying, given the robots’ intelligence, which will be far superior to our own (according to the usual criteria and tests for measuring intelligence). Will Sapiens become ectoplasms, delegating tasks such as maintenance and development, … to robots?

7. The two classes of AI and their applications

There are two main classes of AI: “supervised” AI and “unsupervised” AI. These two classes are associated with two major fields of application for AI techniques, which we will now distinguish.

Unsupervised AI (Figure 11) is associated with searches whose target is not characterised a priori. For example, searching for the possible presence of cancer cells in an image obtained by CT scan or MRI. Unsupervised AI mainly concerns the processing and exploitation of large databases. Mechanical or physico-chemical sensors are widely used today because they are robust, inexpensive and energy-efficient. They provide millions of data points due to their sheer number, but also because they generally remain in place for long periods of time. AI software will be able to detect the existence of internal structures or critical points (such as the appearance of a crack in a structure, which may not be visible to the human eye). Here too, some applications are very welcome, such as the fight against corruption or trafficking of all kinds, while others are more questionable, such as personalised marketing.
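The idea of finding a critical point without being told in advance what to look for can be sketched with a very simple statistical stand-in. The code below is not a neural network: it flags readings that lie far from the median of the series, using the median absolute deviation as the yardstick. The readings, the threshold of 3.5 and the function name are hypothetical.

```python
import statistics


def flag_anomalies(readings, threshold=3.5):
    """Return the indices of readings whose modified z-score exceeds `threshold`."""
    median = statistics.median(readings)
    deviations = [abs(r - median) for r in readings]
    mad = statistics.median(deviations)  # median absolute deviation
    if mad == 0:
        return []  # no spread at all: nothing can be called unusual
    return [
        i for i, dev in enumerate(deviations) if 0.6745 * dev / mad > threshold
    ]


# Hypothetical crack-opening readings (mm) from one sensor; no labels are supplied.
READINGS = [10.1, 10.0, 9.9, 10.2, 10.0, 14.5, 10.1, 9.8]

if __name__ == "__main__":
    print("indices flagged as unusual:", flag_anomalies(READINGS))
```

No example of a “bad” reading was ever provided: the unusual value is singled out only because it does not fit the structure of the rest of the series.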

8. Natural hazards

This results in very large databases, derived from temporal and/or spatial series. It then becomes necessary to exploit these databases using intelligent techniques that can detect critical points of cracking/rupture/settlement/water leakage, provide an analysis of potential failure mechanisms and even – in the long term – recommend reinforcement or other measures to be taken. In fact, the only tricky point today is the machine learning phase, which cannot rely solely on cases of landslides or rockfalls that have actually occurred and been instrumented.

We are therefore led to supplement these databases with numerical calculations carried out by the digital twin mentioned above. The results of this digital twin, a carefully calibrated numerical model throughout the life of the natural structure (slope, cliff, etc.), can be compared by AI with the measurements provided by the sensors, thus providing accurate, quantified and rich information for analysing the behaviour of the natural system. This leads to “smart maintenance” of buildings or “smart monitoring” of natural structures.
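The comparison between the digital twin and the sensors can be sketched in its simplest form. In the code below, a fixed tolerance stands in for the AI that would perform this comparison in practice; the displacement values, the tolerance and the function name are all hypothetical.

```python
def residual_alerts(measured, simulated, tolerance):
    """Indices where the sensor reading departs from the twin by more than `tolerance`."""
    if len(measured) != len(simulated):
        raise ValueError("measured and simulated series must have the same length")
    return [
        i
        for i, (m, s) in enumerate(zip(measured, simulated))
        if abs(m - s) > tolerance
    ]


# Hypothetical slope displacements (mm) at five successive dates.
MEASURED = [1.0, 1.2, 1.5, 1.9, 3.4]   # from the sensors
SIMULATED = [1.0, 1.3, 1.6, 1.9, 2.2]  # from the calibrated numerical model

if __name__ == "__main__":
    print("dates needing attention:", residual_alerts(MEASURED, SIMULATED, tolerance=0.5))
```

As long as the measurements follow the twin, the slope behaves as the model expects; a growing gap is the signal that either the model needs recalibration or the natural structure is changing regime.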

9. Key messages

- Artificial intelligence is based on two pillars: (1) the definition of an artificial neural network and (2) the learning of this network through known cases, defined by inputs and outputs grouped into databases.

- AI is then able to solve any problem that can be learned within the scope of the database.

- The limitations of AI relate, on the one hand, to non-compressible fuzzy databases and, on the other hand, to problems without asymptotic solutions.

- A distinction is made between supervised AI (robots, etc.) and unsupervised AI (searching for critical values in a data set, etc.).

- The question of conscious inanimate objects opens up both fascinating and terrifying possibilities.

- Natural hazards, for example, are a field of application well suited to AI algorithmic techniques.

References and notes

Cover image. White robot, creation © Alex Knight, License CC0, via Pexels

[1] The explicit resolution of equations is often limited to linear equations for which mathematical methods exist. Only a few non-linear equations have been solved analytically.

[2] The Drac joins the Isère river near Grenoble, and the Isère joins the Rhône further downstream, near Valence in the south of France.

The Encyclopedia of the Environment by the Association des Encyclopédies de l'Environnement et de l'Énergie (www.a3e.fr), contractually linked to the University of Grenoble Alpes and Grenoble INP, and sponsored by the French Academy of Sciences.

To cite this article: DARVE Félix (March 9, 2026), Artificial intelligence and natural hazard prevention, Encyclopedia of the Environment, Accessed March 28, 2026 [online ISSN 2555-0950] url : https://www.encyclopedie-environnement.org/en/soil/artificial-intelligence-natural-hazard-prevention/.

The articles in the Encyclopedia of the Environment are made available under the terms of the Creative Commons BY-NC-SA license, which authorizes reproduction subject to: citing the source, not making commercial use of them, sharing identical initial conditions, reproducing at each reuse or distribution the mention of this Creative Commons BY-NC-SA license.